
What is quantum computing?

Quantum computing is about to start a technological revolution. Here, we will tell you how and why!

Originally written for the CEBE blog by Daniel Pérez

Contributing Writer in collaboration with CEBE & postdoctoral researcher at KU Leuven - IMEC

We are surrounded by information. Constantly. Any sound or image can be decomposed into a series of fundamental information units that can be reconstructed if we have the appropriate tools. For example, sound is actually the vibration of air molecules around us. By using a cell phone, we can capture these vibrations and transform them into ones and zeros, which are stored in the memory of our phone. When we want to hear the recorded sound again, we just have to send the sequence of ones and zeros to a speaker and voilà, we can listen to the sound again.
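As a rough illustration of the recording step described above, the following Python sketch turns a pure tone into strings of ones and zeros and then turns them back into approximate pressure values. The function names `record` and `play_back`, the 440 Hz tone, the 8000 Hz sample rate and the 8 bits per sample are all assumptions chosen for the example; a real phone uses a microphone, dedicated hardware and more elaborate encodings.

```python
import math

def record(frequency_hz, sample_rate_hz, n_samples, bits_per_sample=8):
    """Sample a pure tone and encode each sample as a string of ones and zeros."""
    levels = 2 ** bits_per_sample
    encoded = []
    for n in range(n_samples):
        # Simulated air-pressure value at this instant, between -1.0 and 1.0.
        pressure = math.sin(2 * math.pi * frequency_hz * n / sample_rate_hz)
        # Map the range [-1, 1] onto the integers 0 .. levels - 1.
        level = round((pressure + 1) / 2 * (levels - 1))
        encoded.append(format(level, f"0{bits_per_sample}b"))
    return encoded

def play_back(encoded, bits_per_sample=8):
    """Turn the stored bit strings back into approximate pressure values."""
    levels = 2 ** bits_per_sample
    return [int(bits, 2) / (levels - 1) * 2 - 1 for bits in encoded]

# Hypothetical example: five samples of a 440 Hz tone.
bits = record(frequency_hz=440, sample_rate_hz=8000, n_samples=5)
print(bits)             # what would be stored in memory
print(play_back(bits))  # what would be sent to the speaker
```

The reconstructed values are not identical to the original ones: with 8 bits per sample there are only 256 possible levels, so each value is rounded to the nearest one. Using more bits per sample reduces that rounding error.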

These basic units of information are known as classical bits, and each one can be in one of two possible states: one or zero. Using transistors to implement classical bits, we can build computers, which in turn allow us to communicate with our family members over the internet, book airline tickets, or perform mathematical calculations that would otherwise take thousands of years.

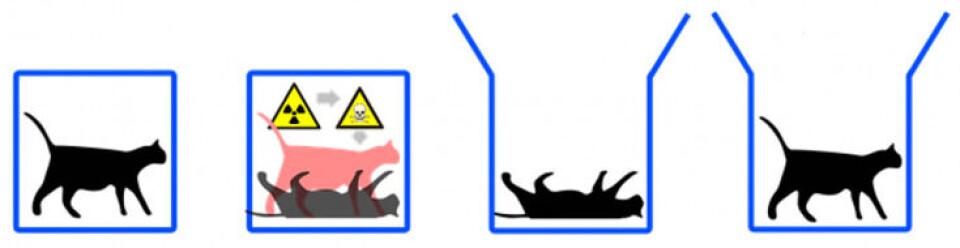

However, what if classical bits followed the laws of quantum mechanics? What if they could behave like Schrödinger's cat, except that instead of being alive and dead at the same time, they could take the value zero and one simultaneously?

The bits that follow the laws of quantum mechanics are known as qubits, and they are the fundamental unit of a quantum computer. Quantum computers can take advantage of different phenomena that only occur in the quantum world, such as superposition, interference or entanglement (https://en.wikipedia.org/wiki/...), to solve certain problems much more efficiently than a conventional computer.
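To make superposition and interference a little more concrete, here is a minimal Python sketch that simulates a single qubit on an ordinary computer. It assumes the standard textbook description, in which a qubit is a pair of amplitudes and the Hadamard gate creates an equal superposition; the names `ZERO`, `hadamard`, `probabilities` and `measure` are made up for this example, and amplitudes are restricted to real numbers for simplicity.

```python
import math
import random

# A qubit state is a pair of amplitudes (a0, a1) with a0^2 + a1^2 = 1.
# ZERO is the state that always gives 0 when measured.
ZERO = (1.0, 0.0)

def hadamard(state):
    """Apply the Hadamard gate, which turns ZERO into an equal superposition."""
    a0, a1 = state
    s = 1 / math.sqrt(2)
    return (s * (a0 + a1), s * (a0 - a1))

def probabilities(state):
    """Probability of reading 0 and of reading 1 when the qubit is measured."""
    a0, a1 = state
    return (abs(a0) ** 2, abs(a1) ** 2)

def measure(state, rng=random):
    """Simulate one measurement: the result is always a plain 0 or 1."""
    p0, _ = probabilities(state)
    return 0 if rng.random() < p0 else 1

superposed = hadamard(ZERO)
print(probabilities(superposed))  # roughly (0.5, 0.5): superposition

# Applying the gate a second time makes the two paths to "1" cancel out.
back = hadamard(superposed)
print(probabilities(back))        # roughly (1.0, 0.0): interference
```

Simulating one qubit this way is easy, but the number of amplitudes doubles with every qubit added, which is one reason classical computers struggle to imitate large quantum computers.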

For example, RSA, one of the cryptographic systems that protect us when we surf the internet, relies on how hard it is to split a large number into its prime factors. If I give you a very large number, let's say one with about 600 digits, and I ask you to find its prime factors, how long do you think it will take? Well, I can tell you: an estimated 80 million years at minimum on a conventional computer. This same problem is estimated to take just eight hours of computation on a sufficiently large quantum computer.
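The following Python sketch gives a feel for why factoring gets hard as numbers grow. It uses plain trial division, which is a deliberately naive method and not what real attacks on RSA use, and the function name `smallest_prime_factor` and the example primes are assumptions picked for illustration. The quantum speed-up mentioned above comes from a different approach (Shor's algorithm), which is not shown here.

```python
def smallest_prime_factor(n):
    """Trial division: return (factor, number_of_divisions_tried)."""
    tries = 0
    d = 2
    while d * d <= n:
        tries += 1
        if n % d == 0:
            return d, tries
        d += 1
    # No divisor found up to sqrt(n), so n itself is prime.
    return n, tries

# Products of two primes of increasing size, like tiny RSA-style numbers.
for p, q in [(101, 103), (10007, 10009), (999983, 1000003)]:
    n = p * q
    factor, tries = smallest_prime_factor(n)
    print(f"{n} = {factor} x {n // factor} after {tries} trial divisions")
```

Each time the primes gain two digits, the work grows roughly a hundredfold. A 600-digit number is built from primes of about 300 digits each, which puts this kind of search far out of reach.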

Another field where quantum computing promises significant improvements is the design of drugs and new chemical compounds. Today, simulating molecules or protein folding requires the use of approximations, which limits the precision and the type of simulations that can be performed. However, a quantum computer can itself be used as a simulator, which in principle makes it possible to simulate the molecule without these approximations.

And it does not stop there: finance, optimization and machine learning are other fields where quantum computing could bring great advantages.

Despite having the potential to revolutionize different industries, quantum computing is still in its late teens. Today we are in what is known as the NISQ (Noisy Intermediate-Scale Quantum) era, that is, the era of computers with between 50 and 100 imperfect qubits. This makes running algorithms that involve many steps unfeasible, because errors accumulate at each step. Furthermore, quantum computers still cannot solve any problems that we consider useful.
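A back-of-the-envelope Python sketch shows why accumulated errors rule out long algorithms. It assumes that every step fails independently with the same probability, and the 0.1% error rate is a hypothetical figure chosen for illustration, not a measured value for any particular machine.

```python
def success_probability(error_per_step, n_steps):
    """Chance that none of n_steps operations fails, assuming independent errors."""
    return (1 - error_per_step) ** n_steps

# Hypothetical error rate of 0.1% per step.
for n_steps in (10, 100, 1000, 10000):
    p = success_probability(0.001, n_steps)
    print(f"{n_steps:>6} steps: {p:.1%} chance of an error-free run")
```

Under these assumptions, even a small error per step makes an error-free run unlikely once an algorithm needs thousands of steps.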

But there are reasons to be optimistic. Companies like Google, IBM and Honeywell have invested considerable resources and effort in this technology. Currently, all these companies have quantum computers that are accessible to the public through the cloud. In 2019, Google even achieved a historic milestone: it performed a calculation on one of its quantum computers in just 200 seconds that, by its own estimate, would have taken around 10,000 years on the largest existing supercomputer.

In addition, so far this year alone, $450 million of private capital has been invested, and Google, IBM and Honeywell have announced their plans for the next few years. Without a doubt, very interesting years await the world of computing and informatics.
